A Finnish farmer checks the weather on her phone six times a day. Temperature, rain probability, wind speed, collapsed into a glance that shapes what she does before breakfast. The data behind that glance comes from NOAA, a US federal agency whose $7.1 billion budget funds weather satellites, ocean monitoring, and the world’s largest forecasting operation. It comes from a European forecasting centre that runs the most accurate weather models on Earth. And from a chain of standardisation, regulation, and institutional infrastructure built over 150 years across two continents.

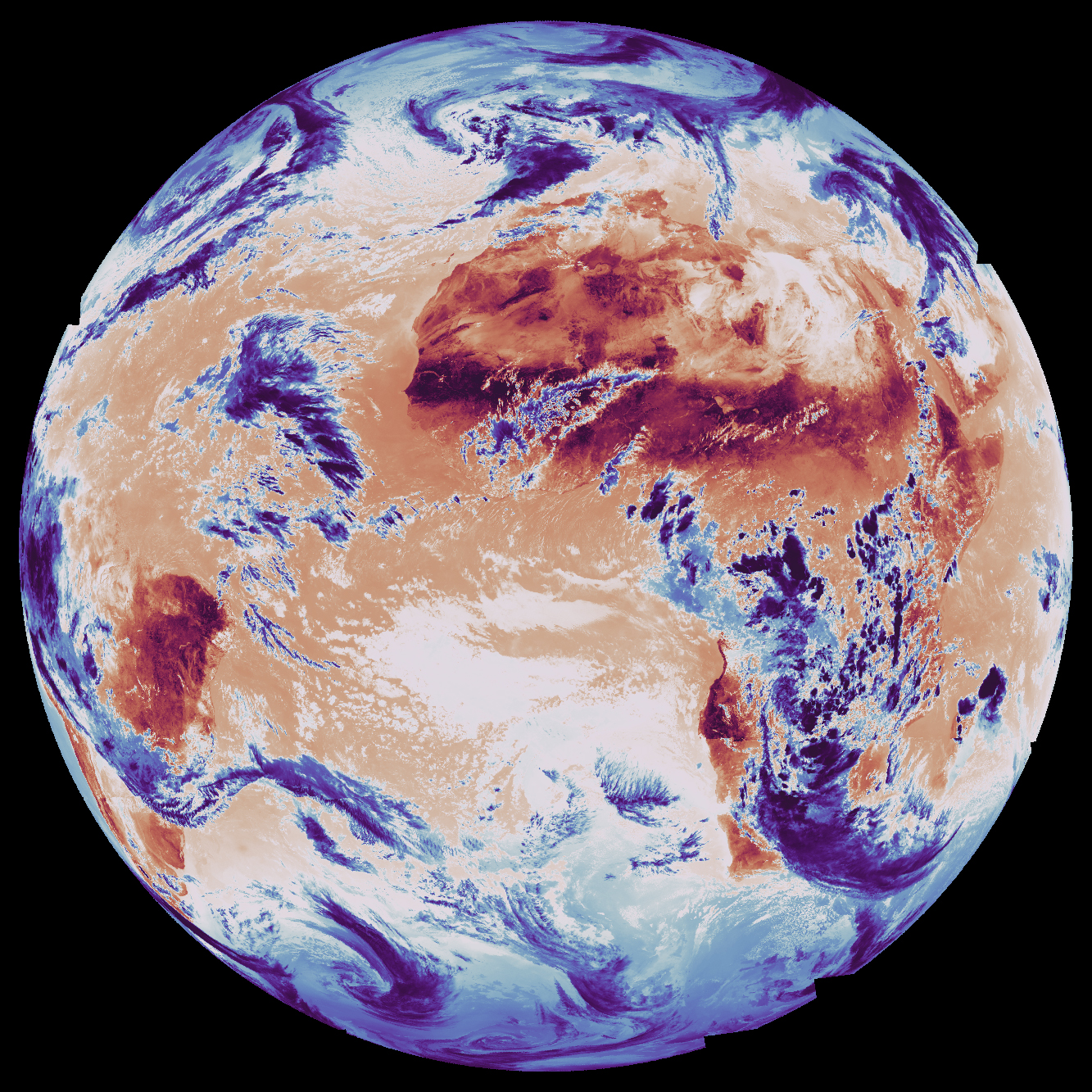

Roughly seven hundred kilometres above her field, a satellite images her soil every six days. The radar measures soil moisture with a precision no ground sensor matches. The data is free, open, and sitting on a European server. She has never seen it. No app delivers it. No system feeds it into her irrigation decisions. The satellite might as well not exist.

This gap between what Earth observation (EO) satellites collect and what anyone does with it has persisted for decades. The industry has spent those decades convinced that one more technological breakthrough will close it. First it was open data. Then new data formats. Then AI. Each time, the same assumption: we are one innovation away from the moment satellite data becomes as embedded in daily decisions as weather.

The assumption is wrong, and we have 150 years of receipts from the domain next door proving it.

Six conditions, not one

Technology mattered for weather. Of course it did. Satellites, computer models, supercomputers, data formats: each generation expanded what was possible. But technology alone never created the transition from expert-only weather charts to something a child checks on a phone.

That required six conditions, built simultaneously, mostly by governments. Open data. Binding standards. Centralised infrastructure. Interpretation so simple anyone can act on it. Data pipelines feeding automatically into specific industries. And regulatory mandates that forced all of this to happen.

The US discovered these over a century and a half. A national weather service was created in 1870, and became the Weather Bureau in 1890, because the government recognised that weather observation was a public good no private entity would provide at scale. Open data came early: US federal data has been public domain since the founding, so weather observations were free from day one.

But free data alone did nothing. For decades, weather information was useful to trained meteorologists and nobody else. Each condition required deliberate institutional action.

The International Civil Aviation Organization (ICAO), the body governing international civil aviation, made weather briefings mandatory for all flights. Not optional. Not “recommended best practice.” Airlines that didn’t comply didn’t fly. That single mandate created an entire aviation weather infrastructure. Once the infrastructure existed, expanding what flowed through it cost almost nothing. Turbulence forecasts, volcanic ash alerts, space weather warnings: they all rode the same pipeline.

The World Meteorological Organization fought for years in the 1990s to establish the principle that essential weather data must be exchanged freely between nations. This was a political battle against agencies, including several European weather services, that sold data commercially and resisted giving it away. The principle won because the alternative, fragmentation, was operationally intolerable for forecasting.

Data format standardisation was imposed, not adopted voluntarily. The WMO’s machine-readable format became the global standard because it was mandated. When the transition to a new version required retiring old formats, the WMO set a hard cutoff date. Agencies that hadn’t converted were cut off. That is how you achieve standardisation.

Interpretation simplification was the breakthrough that created mass adoption. Weather went from “500mb geopotential height anomaly” (a phrase that means something to atmospheric scientists and nobody else) to “it’s going to rain tomorrow.” This was an institutional design choice: the US National Weather Service decided its job was to serve the public, not just other meteorologists, and rebuilt its entire product suite accordingly.

Centralised infrastructure made it work at scale. The US government’s observation systems, forecast models, and data pipelines function as a platform others build on. The $2.5-3.5 billion commercial weather services market exists because this public platform exists. AccuWeather, The Weather Company, DTN, StormGeo: not one of them builds satellites. They build interpretation and sector-specific products on top of publicly funded infrastructure.

The value sits in translation, not in observation hardware.

Europe took a different path to the same destination. The European Centre for Medium-Range Weather Forecasts, ECMWF, achieved what no national service could alone: a single institution with the computing power to build the world’s most accurate forecast. But Europe locked its data behind paywalls for thirty years. The result: a technically superior system with a commercially stunted ecosystem.

It took two decades of EU legislation to finally free European weather data. Different path. Same lesson: remove any one of the six conditions and the system doesn’t work.

The mirror

Now hold those six conditions up to Earth observation.

Open data: partially met. The EU’s Copernicus programme provides free satellite data. The US Landsat programme dropped fees in 2008; peak annual sales had been 19,000 scenes, and within a year downloads hit one million. By 2017, annual downloads exceeded 20 million. Real progress. But commercial high-resolution data, the kind most useful for decisions, remains expensive and proprietary.

Binding standards: emerging. The industry has built one widely adopted cataloguing standard (STAC, the SpatioTemporal Asset Catalog specification) and is starting to standardise how you request new satellite images. Matt Hanson at Element 84 recently made a compelling case for a standardised ordering system that would let software request satellite imagery the way it currently searches archives. The fragmentation he describes is real: one satellite operator has one kind of interface, another has a completely different one, a third wants you to email a shapefile to a sales engineer. He’s solving a genuine problem. It is one condition out of six.
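To make "searching archives" concrete, here is a minimal sketch of the JSON body a client might send to a STAC API item search endpoint. The field names (`collections`, `bbox`, `datetime`, `limit`) follow the STAC API Item Search specification as I understand it; the collection ID, the bounding box, and the helper name `build_stac_search` are hypothetical and chosen only for illustration. No request is sent.

```python
import json


def build_stac_search(collection, bbox, start, end, limit=10):
    """Build a STAC item-search request body (illustrative helper, not a library API).

    bbox is (west, south, east, north) in degrees; start and end are
    RFC 3339 timestamps joined into a closed interval with "/".
    """
    if len(bbox) != 4:
        raise ValueError("bbox must have four values: west, south, east, north")
    return {
        "collections": [collection],
        "bbox": list(bbox),
        "datetime": f"{start}/{end}",
        "limit": limit,
    }


# Hypothetical collection ID and a small, made-up box in southern Finland.
body = build_stac_search(
    "example-sar-collection",
    (24.9, 60.1, 25.0, 60.2),
    "2024-06-01T00:00:00Z",
    "2024-06-30T23:59:59Z",
)
print(json.dumps(body, indent=2))
```

The point of the sketch is how little it contains: a shared search body is what a cataloguing standard buys. Nothing comparable yet exists for ordering new imagery, which is the gap the ordering proposal targets.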

Centralised infrastructure: fragmented. Copernicus comes closest, but it is split across six separate services run by different organisations. Google and Microsoft provide powerful analytical platforms, but they are commercial products, not public infrastructure.

Interpretation simplification: absent. This is the biggest gap and almost nobody is working on it. Weather went from expert jargon to language anyone can act on. Earth observation hasn’t made that leap. The data still arrives in forms that require specialist knowledge to interpret. Nobody has built the equivalent of a weather app for satellite data. Not because it’s technically impossible, but because the institutional infrastructure that would make it meaningful doesn’t exist.

Automated data pipelines into specific industries: almost nonexistent. Weather flows automatically into energy grids, aviation, and insurance. Earth observation has pilot projects. The single real exception: the EU’s Common Agricultural Policy now mandates satellite-based monitoring for farm subsidy compliance, replacing farmer self-reporting and random physical inspections. That it took an EU regulation to create one such pipeline tells you everything about the state of the other five conditions.

Regulatory mandates: the critical absence. No equivalent of the international aviation body mandates EO data for any sector. No regulator requires satellite-derived information in operational decisions. Voluntary principles exist. Nobody enforces them. The EU’s recent regulation classifying certain public data as “high-value” (meaning it must be free and machine-readable) covers both weather and Earth observation data on paper. In practice, it frees up national-level environmental datasets. It does nothing to mandate that any institution act on what those datasets reveal.

The value chain is inverted

The commercial consequences of these absences are visible in every satellite company’s finances.

Planet Labs, the poster child of the commercial satellite revolution, reported $244 million in revenue last year. Government customers (defence, intelligence, and civil agencies) account for roughly two-thirds of that revenue. Defence and intelligence alone grew over 30% year-over-year. The commercial sector, the market that was supposed to be the growth engine, remains the smallest segment and has faced headwinds.

This is not a Planet problem. It’s structural.

Compare this to weather. The commercial weather services market is worth $2.5-3.5 billion globally and sits almost entirely in the interpretation layer. Companies that don’t build satellites built billion-dollar businesses translating free public data into sector-specific decisions.

In weather, the money is overwhelmingly in what you DO with the data, not in collecting it. In Earth observation, that ratio is inverted. Billions flow into satellite hardware. The interpretation layer is thin and struggling.

Without the six conditions creating a downstream market, investment goes to the only thing you can build without institutional infrastructure: more satellites. And since no decision infrastructure exists on the receiving end, those satellites sell to the only buyers who already have their own interpretation capacity: military and intelligence agencies. That is the one place where the full chain, from observation to analysis to decision, already exists independently.

The commercial satellite race, the competition on image sharpness, revisit frequency, and sensor type, is a structural symptom. When there is no functioning demand infrastructure, the only way to compete is on supply-side specifications. Nobody in commercial weather competes on satellite resolution. The value is in interpretation, and the six conditions created the market for that.

Commercial EO satellites are not the problem. Government satellites are slow: one major European satellite took a decade from concept to orbit, involving sixty companies, for a single instrument. Commercial operators provide real advantages in speed, agility, and flexibility.

Those advantages land precisely where interpretation infrastructure already exists: government defence and intelligence. And only there. Until regulations force an insurer to use satellite-derived flood exposure the way aviation regulations force airlines to use weather briefings, commercial satellites have exactly one functioning market.

The industry keeps searching for the one lever that will trigger the inflection. A decade ago it was open data. Downloads exploded; the downstream market didn’t. Then new formats and cloud platforms, which genuinely made data more accessible. Now AI: foundation models, autonomous agents, uncertainty quantification. Each lever works. Each is real progress on an individual condition.

Weather needed all six simultaneously.

Workshops won’t write regulations

The pattern repeats in the institutions that should know better. Three-day workshops producing PDF roadmaps and framework diagrams. Innovation labs running ideas competitions and hosting visiting researchers. Research sprints winning awards for AI models that can flag their own uncertainty. All genuine. All insufficient.

After years of workshops, roadmaps, and frameworks: no institutional change, no regulatory proposals, no automated pipelines built into any industry.

The most revealing case sits inside ECMWF itself. For over a decade, the same institution that proved the six conditions work for weather has been running the Copernicus Atmosphere Monitoring Service: continuous global air quality monitoring, greenhouse gas tracking, pollution forecasts. The data is free. The formats are standardised. The infrastructure is world-class. It even offers simplified products: air quality indices, forecasts, support for smartphone apps.

After ten years, it still hasn’t become embedded the way weather has. How many Europeans check their air quality forecast daily? Where is the automated link to city traffic management, hospital staffing for respiratory wards, or school decisions about outdoor activities?

This service proves that even ECMWF, even with five of six conditions largely met, cannot create adoption without regulatory force. No EU regulation mandates that cities act on air quality forecasts. No directive requires hospitals to adjust staffing based on pollution predictions.

Five conditions aren’t enough. You need all six.

The second problem

And the story gets harder.

Suppose EO achieves all six conditions. Suppose regulatory will, institutional reform, and commercial innovation create the infrastructure that weather built over 150 years. What does that infrastructure deliver data into?

Institutions designed for a planet that no longer exists.

Weather’s own success proves this. Weather data flows perfectly into decision frameworks that assume the climate is stable. Flood maps built on historical rainfall records systematically underestimate risk as the climate changes. European building codes calculate structural loads, how much wind or snow a building must withstand, using historical measurements that no longer reflect reality.

The European Central Bank found that 60% of banks had no framework for stress-testing climate risk. Agricultural subsidy systems still reference historical crop yields as baselines.

These institutions don’t lack data. They receive weather data perfectly well. The delivery problem was solved. The logic on the receiving end wasn’t. Every one of these frameworks assumes the past reliably predicts the future. When the climate was stable, that assumption was invisible. Now it produces confident wrong answers.

Better data flowing into a wrong framework doesn’t fix the framework. It makes the wrong answers more precise.

Earth observation faces both problems at once. It hasn’t achieved the first shift: building the six conditions that turn raw data into decision infrastructure. And even if it does, it faces a second shift that even weather hasn’t managed: changing institutional logic from backward-looking to forward-looking.

A few domains solved both simultaneously.

Energy is the clearest case. When wind and solar power grew large enough to matter, grid operators couldn’t schedule renewable generation the way they scheduled coal plants. Operators in the US and Europe didn’t bolt weather data onto their old scheduling systems. They rebuilt scheduling around real-time weather forecasting. The forecast IS the schedule. The old logic was replaced.

Parametric insurance is another. Traditional insurance prices risk from historical loss tables: what happened in the past in places like this. Parametric products pay out when a measured condition crosses a threshold: rainfall above X millimetres, wind above Y speed. The institution stopped asking “what happened historically” and started asking “what is happening right now, measured by instruments.”
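The trigger logic described above is simple enough to sketch in a few lines. This is an illustration under stated assumptions, not how any real product is priced: the class name `ParametricPolicy`, the fixed payout, and the example threshold are all hypothetical, and real contracts typically add tiered payouts and rules about which instrument's measurement counts.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ParametricPolicy:
    """Hypothetical policy: a fixed payout once measured rainfall exceeds a trigger."""

    trigger_mm: float  # the "X millimetres" threshold from the text
    payout: float      # fixed amount paid when the trigger is exceeded


def payout_for(policy: ParametricPolicy, observed_rainfall_mm: float) -> float:
    """Return the amount owed for one measurement period.

    There is no loss assessment and no historical loss table: the only
    input is the instrument reading for the period.
    """
    if observed_rainfall_mm > policy.trigger_mm:
        return policy.payout
    return 0.0


policy = ParametricPolicy(trigger_mm=100.0, payout=5000.0)
print(payout_for(policy, 120.0))  # reading above the trigger
print(payout_for(policy, 80.0))   # reading below the trigger
```

The design choice worth noticing is what the function does not take as input: claims history. The measurement is the decision.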

Aviation is a third. Onboard flight computers don’t present weather data to a pilot for interpretation against experience. They ingest wind, temperature, and turbulence data and continuously recompute the optimal flight path. The decision system was designed around real-time data from the start.

The pattern across all three: the old institutional logic wasn’t patched with better data. It was replaced by new logic built around continuous measurement. And regulation was the forcing function every time.

Reinforcement, not compliance

This reframes what Earth observation needs. Not another roadmap. Not another workshop. Not another foundation model.

Regulatory mandates designed to replace institutional logic, not just add data to old systems. The difference matters.

“Insurers should consider satellite data” produces compliance: a consultant hired, a box checked, nothing changed. “Flood insurance pricing must reflect current observed exposure, updated annually from satellite data, with methodology audited against actual losses” produces a different institution. The system’s logic gets rebuilt around continuous observation.

That is the difference between improving a system and recognising that the system itself is the problem.

The difference also matters because of how reinforcement works. A mandate designed for compliance creates an obligation. A mandate designed to replace logic creates self-reinforcing loops.

When insurance pricing accurately reflects satellite-observed risk, properties where risk genuinely decreased get lower premiums. Successful flood defences, better land management: the satellite observes the improvement, the premium drops. That rewards investment in risk reduction. Property owners and municipalities start wanting more satellite-based pricing, not less. The regulation starts the loop. The economics sustain it.

Imagine what that looks like in practice. Your insurance app tells you: “This property’s flood exposure increased 14% since last year.” A farmer’s irrigation system receives: “Your field needs water Thursday; soil moisture dropped below the threshold overnight.” A municipal engineer gets an alert: “This building’s snow load rating is no longer within safety margins based on observed accumulation trends.” Not “here is a satellite image,” not “download this GeoTIFF.” Decisions. In language anyone can act on. The same leap weather made from 500mb geopotential height charts to “bring an umbrella.”
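A minimal sketch of that last translation step, assuming the hard parts (retrieving, calibrating, and validating the satellite measurement) have already happened upstream. The function names, units, and thresholds are hypothetical; the message wording follows the examples above.

```python
def irrigation_message(soil_moisture: float, threshold: float, next_slot: str) -> str:
    """Turn a soil moisture reading into a sentence a farmer can act on.

    soil_moisture and threshold share one unit (for example volumetric
    fraction); next_slot is the next available watering day.
    """
    if soil_moisture < threshold:
        return (
            f"Your field needs water {next_slot}; "
            "soil moisture dropped below the threshold."
        )
    return "No irrigation needed; soil moisture is above the threshold."


def exposure_message(previous: float, current: float) -> str:
    """Describe the year-on-year change in a flood exposure score as a percentage."""
    if previous <= 0:
        raise ValueError("previous exposure must be positive")
    change = (current - previous) / previous * 100
    direction = "increased" if change >= 0 else "decreased"
    return f"This property's flood exposure {direction} {abs(change):.0f}% since last year."


print(irrigation_message(0.18, 0.22, "Thursday"))
print(exposure_message(100.0, 114.0))
```

The code is trivial on purpose. What it cannot supply is the agreed threshold, the audited exposure score, or the obligation for anyone to act on the sentence it produces.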

That world isn’t technically impossible. Every piece of data behind those sentences exists today. What doesn’t exist is the institutional infrastructure that would turn satellite observations into those sentences at scale, or the regulatory mandate that would force the systems receiving them to act.

Weather discovered these reinforcement loops by accident. Free data created commercial services that became a political force defending free data. Simplified interpretation created mass usage that made weather services politically untouchable. Mandates created infrastructure whose sunk cost made expanding the mandates cheap. Each loop reinforced the others. The system became self-sustaining.

Earth observation could design them deliberately. The EU’s satellite-based farm monitoring system is the proof of concept: a mandate that simultaneously created delivery infrastructure and replaced institutional logic. Self-reporting became continuous observation. Once member states build that pipeline, routing additional mandates through it (environmental compliance, carbon verification) becomes a marginal cost.

One sector isn’t a system. Insurance is the obvious next candidate: the economic pain of mispriced climate risk is acute and growing, the satellite data exists, the interpretation is more tractable than most EO applications, and European insurance regulators have the authority.

The honest caveat: weather’s regulatory breakthroughs didn’t happen because someone made a good argument. The 1900 Galveston hurricane killed an estimated 8,000 people and created a political window. Two world wars made weather forecasting a military necessity. Aviation growth created an industry that needed weather integration and pressured regulators to mandate it.

Regulation followed crisis, economic pressure, or the visible cost of doing nothing. Every time.

So the question isn’t whether someone will eventually mandate satellite data in insurance, building codes, and climate risk assessment. As the cost of backward-looking logic becomes more visible, and it does with every flood, every wildfire, every mispriced policy, the political pressure builds. The question is whether the EO industry waits for its own Galveston moment or makes the case before the catastrophe forces it.

The binding constraint isn’t technical

The EO industry’s favourite activity, convening experts to produce frameworks, is itself a symptom of the problem it needs to solve. The Group on Earth Observations has been running since 2005: twenty years of data-sharing principles that nobody enforces. The World Economic Forum projects $3.8 trillion in EO value with zero institutional mechanism to realise it. Research labs produce excellent science that changes no institutions. Innovation programmes connect people and ideas, while the regulations that would make those connections matter remain unwritten.

It is not true that nobody in the EO ecosystem is trying to build institutional infrastructure. People are trying. The attempts fail politically, stay voluntary, or address one narrow use case.

The EU’s proposed Forest Monitoring Law was the clearest test. It would have mandated satellite-based data collection across all EU forests: standardised, geo-referenced, harmonised. In October 2025, the European Parliament voted it down, 370 to 261. MEPs called it excessive bureaucracy. One critic pointed out that by rejecting obligations for geo-referenced satellite data on tree cover loss, the Parliament had made early detection of threats almost impossible. The one explicit attempt to make satellite data mandatory for environmental monitoring in Europe was killed by the same Parliament that built Copernicus.

The EU Deforestation Regulation comes closest to a regulatory forcing function, but even it hedges. It requires geolocation verification of supply chains; it does not specifically mandate satellite data. It creates a market for EO services without creating the institutional infrastructure that weather has.

Internationally, GEO coordinates 103 member governments, but it is voluntary and technical, not political. It does not commit governments to anything. When NASA’s budget gets cut, GEO cannot intervene. When Sentinel-1B failed and Europe lost half its open SAR capacity for nearly three years, there was no framework to invoke. No backup triggered. The gap isn’t coordination. It’s authority.

None of these efforts are wrong. All of them are insufficient. The insufficiency has the same root: technologists solving technology problems in a domain where the binding constraint is governance.

The institutional anatomy makes this visible. ESA builds satellites. It has a record €22 billion budget. It has zero authority over how anyone uses the data. EUSPA manages the EU’s space programmes operationally and is tasked with “downstream market development.” It also cannot write regulations. The European Commission can propose regulations, and in June 2025 it proposed the EU Space Act: harmonised licensing, debris mitigation, cybersecurity requirements for satellite operators. Supply-side regulation. It governs the hardware. It says nothing about mandating that anyone use the data collected by those satellites.

The bodies that build and operate the observation system (ESA, EUSPA, DG DEFIS) have no authority over insurance pricing, building codes, flood risk assessment, or agricultural policy. Those belong to different directorates, different agencies, different national regulators. The people who build the satellites don’t write the rules for the people who should use the data. And the people who write rules for insurance and buildings aren’t thinking about satellites. When the Commission tried to bridge that gap with the Forest Monitoring Law, mandating satellite data on the demand side, organised interests killed it in Parliament. €22 billion to build the observation system. Zero regulatory authority to ensure it changes a single decision on the ground.

Weather didn’t become decision infrastructure through sharper satellites or faster models or smarter formats. Those things helped. They were not enough. What closed the gap was institutional infrastructure that enforced standardisation, mandated openness, simplified interpretation, and embedded data into the systems where decisions are made. Technology was necessary. The institutions that channelled it into decisions were what made it sufficient.

Earth observation has the technology. The satellites work. The data is, in many cases, free. The formats are improving. The AI is getting better.

What it doesn’t have is the institutional infrastructure that would make any of that matter at scale. And the ecosystem’s attempts to build it keep failing: voted down, left voluntary, or scoped so narrowly they change nothing structural. The binding constraint isn’t technical. It’s regulatory. And regulation isn’t a technology problem.

Until someone with regulatory authority decides that backward-looking institutional logic is no longer acceptable when forward-looking satellite observation exists, the Finnish farmer will keep checking her weather app. And the satellite overhead will keep imaging her field for nobody.