Every institution you interact with — your bank, your insurer, your local government, the company that employs you — was designed for a planet that no longer exists.

Your 30-year mortgage assumes the land will be there in 30 years. Your insurance premium assumes this year’s flood is an anomaly, not a trend. Your city’s infrastructure plan assumes the rainfall patterns of the last century. Your pension fund assumes the coastal real estate in its portfolio will hold value through your retirement.

These aren’t failures of data or analysis. They’re features of a paradigm.

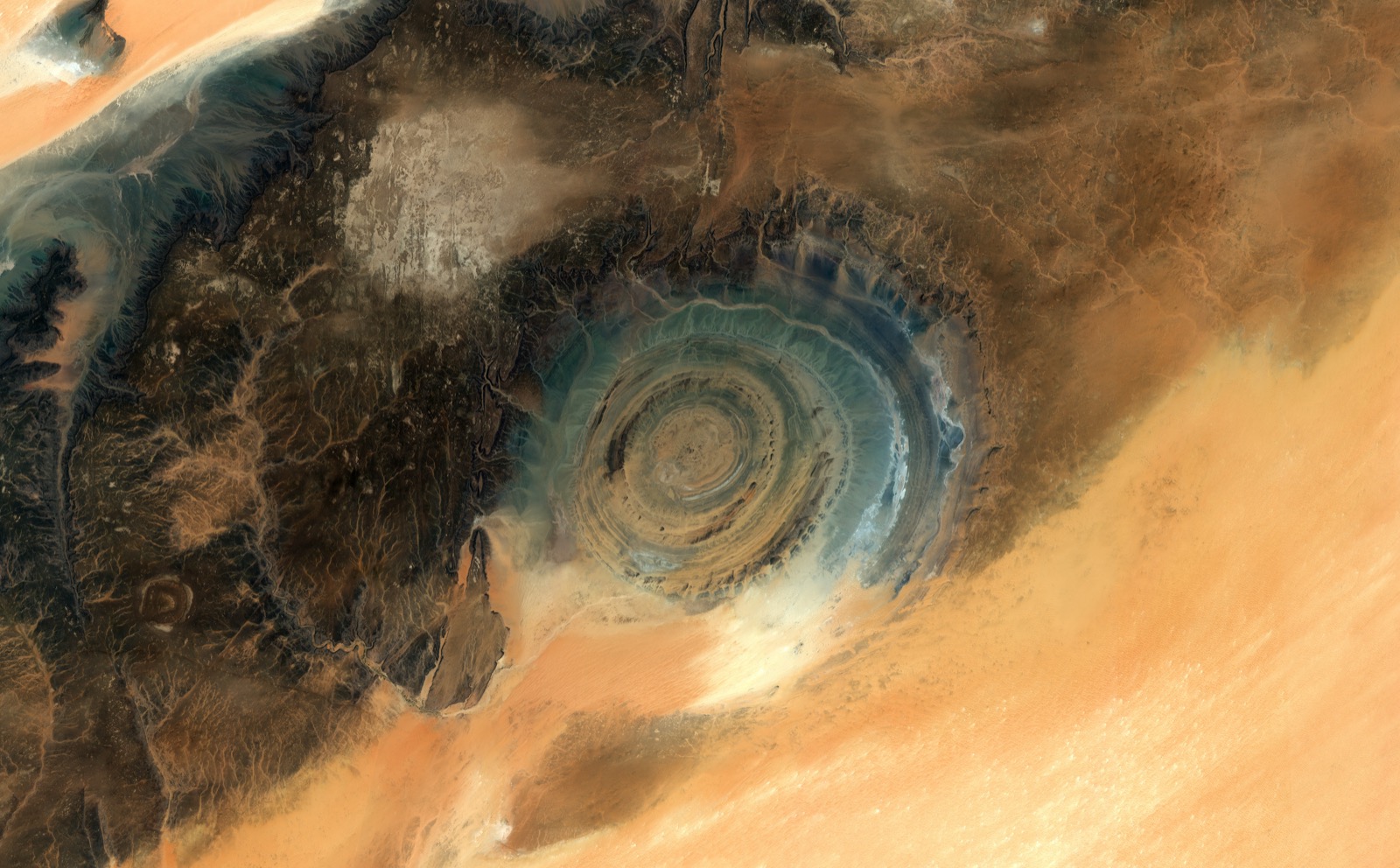

What the satellites see

Earth observation has been surfacing anomalies for decades. Coastlines retreating faster than models predict. Ice sheets losing mass at accelerating rates. Aquifers depleting on trajectories outside historical patterns. Growing zones migrating. Permafrost thawing where the models said it shouldn’t — yet.

The data is now precise enough, frequent enough, and long-running enough to show that the assumption underneath every major institution (that the physical planet is essentially stable, that variability is cyclical, that historical patterns predict future conditions) is not merely wrong but structurally failing. Not in isolated locations. Systematically.

And now AI has arrived. NASA and IBM built Prithvi, an open-source geospatial foundation model. Google DeepMind released AlphaEarth, producing 1.4 trillion numerical fingerprints per year, one for every 10-by-10-meter patch of the planet. Planet partnered with Anthropic to apply large language models to satellite imagery. More than 70 geospatial foundation models, each with over 100 million parameters, now exist.

AI is a genuine paradigm shift. The ability to ask a natural language question of planetary data and get an answer changes who can ask, what they can ask, and how fast understanding moves. A city planner who couldn’t read radar satellite imagery can now ask “show me subsidence risk across my district” and get an answer in seconds. A farmer can ask “what’s happening to my soil” and see it. A family buying a home can ask what the satellite record says about the land they’re investing their life savings in.

That’s real. And it’s new.

But look at how it’s being deployed. Every announcement uses the same language. Planet and Anthropic: “turn satellite imagery into actionable insights.” Google Earth AI: “actionable insights grounded in real-world understanding.” Deloitte and the World Economic Forum: “transformation of vast reams of raw EO measurements into actionable insights.” NASA and IBM: actionable insights. Every press release. Every pitch deck. Every partnership announcement. Actionable insights. Actionable insights. Actionable insights.

Actionable within what framework? Based on what assumptions about the world?

Nobody asks. The phrase itself reveals the assumption: we have an existing decision architecture, we just need better inputs. The machine is fine. Feed it better fuel.

The epicycle problem

There’s a name for what’s happening. Thomas Kuhn called it a paradigm crisis — the moment when observations can no longer be absorbed by the framework designed to make sense of them.

During a crisis, practitioners don’t abandon the paradigm. They double down. They add complexity. Ptolemy’s astronomers, faced with planetary orbits that didn’t match their circular model, didn’t question the model. They added epicycles — circles upon circles upon circles — each one technically brilliant, each one improving prediction accuracy, and each one burying the real problem deeper.

The model was wrong. The epicycles made it wronger in a way that was harder to see.

AI in earth observation is the most sophisticated epicycle ever built. It transforms how we see the planet. And it delivers that transformed seeing into institutional frameworks that can only absorb information confirming the planet they were designed for.

Models are not reality

Atmospheric physics teaches you this early, if you’re paying attention: every model is an approximation. The map is not the territory. The model is not the planet.

Every model draws boundaries. To make the math work, to keep things manageable, because you can’t model everything. Those boundaries encode assumptions. And those assumptions are valid — until they’re not. The moment you forget the boundaries are there, you’re not doing science. You’re doing faith.

Google’s AlphaEarth was trained on data from 2017 to 2024. Seven years of a planet that was already changing. The model’s implicit promise is that the relationships it learned — between land cover and flood risk, between vegetation patterns and agricultural viability, between surface temperature and infrastructure stress — will hold. That’s an assumption, not a fact. It’s not documented anywhere in the product.

A recent study called REOBench tested large geospatial AI models against routine image disruptions — cloud cover, haze, compression artefacts, brightness shifts — and found accuracy drops of up to 20%. If the models stumble on clouds, imagine what happens when the planet itself moves outside the patterns they were trained on. That’s not a failure mode. That’s what climate change means.

This applies equally to the institutional models the AI feeds into. Insurance pricing, infrastructure planning, agricultural yield forecasting — each one draws boundaries, encodes assumptions, and produces outputs that look like reality but aren’t. Useful fictions, until the conditions they were calibrated for change enough to make them dangerous fictions.

And it applies to any new model we build to replace them. The value isn’t in finding the right model. There is no right model. The value is in knowing you’re always using one, and having the discipline to ask: what does this model assume? Where are the boundaries? What would break it?

A model with visible boundaries is a tool you use knowingly. A model with invisible boundaries is a trap you fall into while thinking you’re making progress.

The field knows this. A research paper on responsible AI for earth observation acknowledges that transparency requires understanding and communicating “ambiguities, potential biases, and errors, or conceptual limitations.” The same paper notes that “assuring explainability continues to be challenging.” Translation: we know the models hide their boundaries and we have no idea how to fix it.

When the questions stop making sense

Kuhn’s most unsettling idea is this: competing paradigms aren’t just different answers to the same questions. They’re different questions entirely.

Within the current institutional paradigm, the question is: “How do we more accurately price flood risk for this coastal property portfolio?” AI solves it better than humans. Foundation models process more data, faster, with fewer errors. Progress is real.

But the satellite record isn’t providing a better answer to that question. It’s revealing that the question is wrong.

When flood frequency triples in a decade and the trajectory is steepening, the paradigm response is: update the model, adjust the pricing, recalibrate the risk. But the insurance paradigm assumes risk is distributable — that floods are events, not trends, and that the system absorbs them as random, independent occurrences. When the data shows the system itself is destabilising, “distributable risk” stops being a coherent concept. “How do we price this risk?” becomes meaningless the way “how many epicycles does Mars need?” became meaningless after Copernicus.
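A minimal sketch of why independence carries so much weight in that argument. It assumes, purely for illustration, that every policy in a pool has the same loss standard deviation `sigma` and the same pairwise correlation `rho`; the function name and the numbers are hypothetical, not drawn from any insurer's model. Under those assumptions the variance of the average loss per policy is `sigma**2 * (1 + (n - 1) * rho) / n`.

```python
import math


def pooled_loss_std(n_policies: int, sigma: float, rho: float) -> float:
    """Standard deviation of the average loss per policy in a pool.

    Assumes (hypothetically) that every policy has the same loss standard
    deviation `sigma` and the same pairwise correlation `rho`.
    """
    if n_policies < 1:
        raise ValueError("n_policies must be at least 1")
    variance = sigma**2 * (1 + (n_policies - 1) * rho) / n_policies
    return math.sqrt(variance)


# Illustrative numbers only: compare independent losses with correlated ones.
for n in (100, 10_000, 1_000_000):
    independent = pooled_loss_std(n, sigma=1.0, rho=0.0)
    correlated = pooled_loss_std(n, sigma=1.0, rho=0.2)
    print(f"n={n:>9,}  independent={independent:.4f}  correlated={correlated:.4f}")
```

With `rho = 0` the per-policy uncertainty keeps shrinking as the pool grows. With any positive `rho` it flattens out near `sigma * sqrt(rho)`, however many policies are added. The sketch covers only correlation; a rising trend in the losses themselves would sit on top of it.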

A satellite shows coastal infrastructure built on land subsiding at 3 cm per year. The paradigm question: “What’s the adjusted asset value?” The question from outside: “What does it mean for a financial system to hold assets on land that is physically disappearing?” One fits in a spreadsheet. The other doesn’t fit in any existing institutional category.

Building intentionally

I spent years building climate adaptation products. The conversation was always the same: “Where does this data fit in the customer’s existing workflow?” It sounds pragmatic. It sounds customer-centric. It’s a paradigm question. It assumes the existing workflow is the right frame and just needs better inputs.

The question I wish I’d asked sooner: “What does this data tell us about whether the workflow makes sense?”

The most powerful move isn’t replacing one framework with another. It’s knowing that every framework — including the one you’re building — is a model with boundaries. And building accordingly: with visible assumptions, and a built-in way of recognising when the world has changed enough that the system no longer works.

What would that look like in practice? At every joint in the pipeline, the system makes visible: this model assumes X. This trigger was calibrated to Y conditions. This recommendation holds while Z remains true. And here is the satellite evidence on whether Z remains true. The person at the end — the city planner, the insurer, the farmer, the family — gets not just the recommendation but the conditions under which that recommendation stops being valid.
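One way to picture this, as a rough sketch and not a description of any existing product: a recommendation that carries its own assumptions and can be re-checked against the latest observations. Every name here (`Assumption`, `Recommendation`, `review`, the observation keys) and every threshold is hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical shape for the latest satellite-derived measurements.
Observations = dict[str, float]


@dataclass(frozen=True)
class Assumption:
    description: str                        # stated in plain language
    holds: Callable[[Observations], bool]   # checked against observations


@dataclass(frozen=True)
class Recommendation:
    text: str
    assumptions: tuple[Assumption, ...]

    def review(self, observations: Observations) -> dict:
        """Return the recommendation together with any assumptions that no longer hold."""
        broken = [a.description for a in self.assumptions if not a.holds(observations)]
        return {
            "recommendation": self.text,
            "trustworthy": not broken,
            "broken_assumptions": broken,
        }


# Illustrative thresholds and readings only.
recommendation = Recommendation(
    text="Keep the current flood premium for this district",
    assumptions=(
        Assumption(
            "Subsidence stays below 1 cm per year",
            lambda obs: obs["subsidence_cm_per_year"] < 1.0,
        ),
        Assumption(
            "No more than 2 floods per decade",
            lambda obs: obs["floods_per_decade"] <= 2,
        ),
    ),
)

latest = {"subsidence_cm_per_year": 3.0, "floods_per_decade": 2}
report = recommendation.review(latest)
print(report)
```

The design choice that matters is that the assumptions travel with the recommendation. The person at the end never receives one without the other, and the check can be rerun whenever new observations arrive.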

Nobody builds infrastructure with a self-destruct switch. But infrastructure that knows its own limits would have exactly that: not a dramatic shutdown, but a continuous signal. These are the assumptions this system rests on. This is what the satellite data says about whether those assumptions still hold. When they don’t, this is where the system stops being trustworthy.

The AI that builds the pipeline matters. The AI that surfaces the boundaries matters equally. The technology can do both. What determines which one gets built is the mental model of the people building it.

Build the infrastructure and build it knowing it’s temporary. Serve current decision-makers and show them the limits of their decisions. There’s no market for this. No RFP for “show us where our own thinking breaks.”

But the data exists. The AI exists. And the planet — the actual planet, not any model of it — isn’t waiting for our institutions to catch up.

What we build from that awareness determines whether earth observation becomes the most sophisticated system of epicycles ever constructed, or something that helps us live on the planet we actually have.